A probabilistic perspective on machine learning offers a robust framework for understanding algorithms and data.

Overview of the Book “Machine Learning: A Probabilistic Perspective”

“Machine Learning: A Probabilistic Perspective” frames machine learning not as a collection of unrelated algorithms but as an extension of probability theory. The book emphasizes Bayesian methods, offering a cohesive and mathematically rigorous treatment of core concepts.

It is a comprehensive guide, covering model selection, evaluation, and advanced topics like graphical models, and it provides a solid foundation for both beginners and experienced practitioners seeking a deeper, probabilistic understanding.

The Importance of a Probabilistic Approach

A probabilistic approach to machine learning is crucial because real-world data is inherently uncertain. Unlike deterministic models, probabilistic methods quantify this uncertainty, providing not just predictions but also confidence levels. This is vital for informed decision-making, especially in critical applications.

Bayesian inference, central to this perspective, allows for updating beliefs based on observed evidence. This framework handles missing data in a principled way and supports model comparison, either through fully Bayesian methods or through information criteria such as AIC and BIC, ultimately leading to more reliable and adaptable machine learning solutions.

Foundational Concepts

Probability theory basics, Bayes’ Theorem, and Maximum Likelihood Estimation form the core, enabling the quantification of uncertainty in models and data.

Probability Theory Basics

Probability theory is fundamental to a probabilistic approach in machine learning, providing a mathematical framework for dealing with uncertainty․ It defines events and their likelihood of occurrence, utilizing concepts like sample spaces and probability distributions․ Understanding random variables – discrete or continuous – is crucial, alongside joint and conditional probabilities․

Key principles include the axioms of probability, ensuring probabilities are non-negative and sum to one․ The product rule allows calculating joint probabilities, while Bayes’ theorem, a cornerstone, enables updating beliefs given evidence․ These basics underpin model building, allowing for quantifying confidence in predictions and handling noisy data effectively․ A solid grasp of these concepts is essential for navigating the complexities of machine learning algorithms․

Bayes’ Theorem and its Applications

Bayes’ Theorem is a cornerstone of probabilistic machine learning, elegantly describing how to update the probability of a hypothesis based on observed evidence․ Formally, P(A|B) = [P(B|A) * P(A)] / P(B), it relates the posterior probability P(A|B) to the prior P(A) and likelihood P(B|A)․

Its applications are vast, including spam filtering (classifying emails), medical diagnosis (assessing disease probability), and machine translation․ In machine learning, it forms the basis for Bayesian classifiers like Naive Bayes, enabling probabilistic predictions․ Furthermore, it’s integral to Bayesian inference, allowing for model parameter estimation and uncertainty quantification․ Understanding Bayes’ Theorem is crucial for building robust and interpretable machine learning models․
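
As a small worked illustration of the formula above, the sketch below applies Bayes' Theorem to a diagnostic test. The prevalence, sensitivity, and false-positive rate are made-up numbers chosen only for illustration, and the function name `posterior` is hypothetical.

```python
def posterior(prior, likelihood, false_positive_rate):
    """Return P(A|B) given P(A), P(B|A), and P(B|not A)."""
    # P(B) via the law of total probability.
    evidence = likelihood * prior + false_positive_rate * (1.0 - prior)
    return likelihood * prior / evidence

# Illustrative (assumed) numbers: 1% prevalence, 95% sensitivity,
# 5% false-positive rate.
p = posterior(prior=0.01, likelihood=0.95, false_positive_rate=0.05)
print(f"P(disease | positive test) = {p:.3f}")
```

Even with a fairly accurate test, the posterior stays well below one half here because the prior is so small, which is the kind of effect the theorem makes explicit.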

Maximum Likelihood Estimation (MLE)

Maximum Likelihood Estimation (MLE) is a fundamental method for estimating the parameters of a statistical model․ The core principle involves finding the parameter values that maximize the likelihood function, representing the probability of observing the given data․ Essentially, MLE seeks the parameters that make the observed data “most probable”․

In machine learning, MLE is widely used to train models by adjusting parameters to best fit the training data. For example, in linear regression with Gaussian noise, the MLE of the coefficients coincides with the least-squares solution that minimizes the sum of squared errors. While powerful, MLE can be sensitive to outliers and can overfit when data is scarce, which is why regularization or a prior is often added. It’s a key component in many probabilistic modeling approaches.
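
To make the idea concrete, here is a minimal sketch of MLE for a one-dimensional Gaussian, where the maximizing parameters have a closed form: the sample mean and the (biased, divide-by-n) sample variance. The data values and function names are illustrative assumptions.

```python
import math

def gaussian_log_likelihood(data, mu, sigma2):
    """Log-likelihood of i.i.d. data under N(mu, sigma2)."""
    n = len(data)
    squared_error = sum((x - mu) ** 2 for x in data)
    return -0.5 * n * math.log(2 * math.pi * sigma2) - squared_error / (2 * sigma2)

def gaussian_mle(data):
    """Closed-form MLE: sample mean and divide-by-n variance."""
    n = len(data)
    mu = sum(data) / n
    sigma2 = sum((x - mu) ** 2 for x in data) / n
    return mu, sigma2

data = [2.1, 1.9, 2.4, 2.0, 1.6]  # toy data, assumed for illustration
mu_hat, sigma2_hat = gaussian_mle(data)
print(mu_hat, sigma2_hat)
```

Evaluating `gaussian_log_likelihood` at any other pair of parameters gives a lower value than at `(mu_hat, sigma2_hat)`, which is what "most probable" means here.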

Supervised Learning

Supervised learning uses labeled data to train models that predict outputs from inputs.

Linear Regression from a Probabilistic Viewpoint

Linear regression, traditionally viewed through optimization, gains deeper insight with a probabilistic lens․ Instead of solely finding the line minimizing squared error, we model the target variable as having a Gaussian distribution centered around the linear prediction․ This allows us to quantify uncertainty in our predictions, providing not just a point estimate but a probability distribution․

The weights of the linear predictor and the noise variance of this Gaussian are then estimated using techniques like Maximum Likelihood Estimation (MLE). Under this model, maximizing the likelihood with respect to the weights is equivalent to minimizing squared error. This probabilistic framing makes the noise model explicit and provides a foundation for more complex models.

Furthermore, this perspective facilitates Bayesian linear regression, incorporating prior beliefs about the parameters and updating them with observed data, leading to more robust and informed predictions․
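
The sketch below illustrates the maximum-likelihood version of this view for a single input feature: it fits slope, intercept, and noise variance, then reports a Gaussian predictive distribution at a new input. The data and function names are assumptions for illustration, and the plug-in predictive ignores uncertainty in the fitted weights, which a Bayesian treatment would include.

```python
import math

def fit_linear_gaussian(xs, ys):
    """MLE for y ~ N(intercept + slope * x, sigma2) with one feature."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # MLE of the noise variance: mean squared residual (divide by n).
    sigma2 = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys)) / n
    return intercept, slope, sigma2

def predict(x_new, intercept, slope, sigma2):
    """Plug-in predictive distribution: (mean, standard deviation)."""
    return intercept + slope * x_new, math.sqrt(sigma2)

xs = [0.0, 1.0, 2.0, 3.0]  # toy inputs, assumed
ys = [1.1, 2.9, 5.2, 6.8]  # toy targets, assumed
intercept, slope, sigma2 = fit_linear_gaussian(xs, ys)
mean, std = predict(4.0, intercept, slope, sigma2)
print(f"prediction at x=4: {mean:.2f} +/- {std:.2f}")
```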

Logistic Regression and Generalized Linear Models

Logistic regression, for binary classification, extends the probabilistic approach․ Instead of predicting a continuous value, we model the probability of belonging to a specific class using the sigmoid function․ This function maps any real value to a probability between 0 and 1, representing the likelihood of a positive outcome․

This naturally fits within the Generalized Linear Model (GLM) framework, which allows us to model various types of response variables – not just Gaussian, but also binary, count, or categorical data – by linking them to a linear predictor through a link function․

As in linear regression, parameters are estimated via MLE, maximizing the likelihood of the observed data. Unlike linear regression, logistic regression has no closed-form solution, so the likelihood is maximized iteratively. The flexibility of GLMs makes them well suited to modeling diverse real-world scenarios.
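
A minimal sketch of logistic regression fit by gradient ascent on the Bernoulli log-likelihood, for one input feature, might look as follows. The learning rate, step count, data, and function names are all illustrative assumptions; practical implementations typically use second-order or quasi-Newton optimizers instead.

```python
import math

def sigmoid(z):
    """Map a real value to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, lr=0.1, steps=2000):
    """Gradient ascent on the mean log-likelihood of a 1-D logistic model."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the log-likelihood: sum of (label - predicted prob) * input.
        grad_w = sum((y - sigmoid(w * x + b)) * x for x, y in zip(xs, ys))
        grad_b = sum((y - sigmoid(w * x + b)) for x, y in zip(xs, ys))
        w += lr * grad_w / n
        b += lr * grad_b / n
    return w, b

xs = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]  # toy inputs, assumed
ys = [0, 0, 1, 0, 1, 1]                 # toy labels (not linearly separable)
w, b = fit_logistic(xs, ys)
print(f"P(y=1 | x=2) = {sigmoid(w * 2.0 + b):.2f}")
```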

Naive Bayes Classifier: Principles and Implementation

The Naive Bayes classifier embodies a simple yet effective probabilistic approach to classification․ It applies Bayes’ Theorem with a “naive” assumption of feature independence – meaning it assumes features are conditionally independent given the class․ Despite this simplification, it often performs surprisingly well, especially in high-dimensional spaces․

Implementation involves calculating prior probabilities for each class and likelihoods of features given each class, derived from training data․ Prediction then uses Bayes’ Theorem to compute the posterior probability of each class for a new instance․

The classifier is computationally efficient and easy to implement, making it a valuable baseline model. Its simplicity belies its power in various applications.
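
The implementation steps described above can be sketched for word-count features as follows. This is a multinomial variant with add-one (Laplace) smoothing, which is one common choice rather than the only one; the tiny corpus and the function names are made up for illustration.

```python
import math
from collections import Counter, defaultdict

def train_naive_bayes(docs, labels):
    """Count class frequencies and per-class word frequencies."""
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)
    vocab = set()
    for words, label in zip(docs, labels):
        word_counts[label].update(words)
        vocab.update(words)
    return class_counts, word_counts, vocab

def predict_posteriors(model, words):
    """Return P(class | words) for every class, using Bayes' theorem."""
    class_counts, word_counts, vocab = model
    total_docs = sum(class_counts.values())
    log_scores = {}
    for label, count in class_counts.items():
        score = math.log(count / total_docs)  # log prior
        total_words = sum(word_counts[label].values())
        for w in words:
            if w in vocab:  # words never seen in training are skipped
                # Add-one smoothed log likelihood of the word given the class.
                score += math.log((word_counts[label][w] + 1) / (total_words + len(vocab)))
        log_scores[label] = score
    # Normalize in log space (log-sum-exp) to get posteriors that sum to one.
    top = max(log_scores.values())
    log_norm = top + math.log(sum(math.exp(s - top) for s in log_scores.values()))
    return {label: math.exp(s - log_norm) for label, s in log_scores.items()}

docs = [["win", "money", "now"], ["meeting", "at", "noon"],
        ["win", "prize", "money"], ["lunch", "meeting", "today"]]
labels = ["spam", "ham", "spam", "ham"]
model = train_naive_bayes(docs, labels)
post = predict_posteriors(model, ["win", "money"])
print(post)
```

Working in log space avoids numerical underflow when many small likelihoods are multiplied together.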

Support Vector Machines (SVMs) with Probabilistic Outputs

Support Vector Machines (SVMs) traditionally provide hard classification decisions. However, obtaining probabilistic outputs (estimates of class membership confidence) is often crucial. This is achieved through methods like Platt scaling, which fits a logistic regression model to the SVM’s decision values.

Platt scaling passes the SVM’s decision value through a sigmoid whose two parameters are typically fit on held-out or cross-validated data, turning the raw score into a probability and allowing for more informed decision-making. This provides a probabilistic interpretation of the output of a margin-maximizing classifier.

Providing confidence levels makes SVMs more useful in risk-sensitive applications.
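
As a rough sketch of the calibration step, the code below fits the two sigmoid parameters to a handful of decision values by gradient descent on the negative log-likelihood. The decision values are invented stand-ins for the output of a trained SVM, the function names are hypothetical, and the target smoothing used in Platt's original procedure is omitted for brevity.

```python
import math

def platt_probability(score, a, b):
    """Calibrated P(y=1 | score) = 1 / (1 + exp(a * score + b))."""
    return 1.0 / (1.0 + math.exp(a * score + b))

def fit_platt(scores, labels, lr=0.1, steps=5000):
    """Fit (a, b) by gradient descent on the mean negative log-likelihood."""
    a, b = 0.0, 0.0
    n = len(scores)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for f, y in zip(scores, labels):
            p = platt_probability(f, a, b)
            # With this parameterization, d(NLL)/da = (y - p) * f and d(NLL)/db = y - p.
            grad_a += (y - p) * f
            grad_b += (y - p)
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b

# Assumed held-out decision values and true labels (not from a real SVM).
scores = [-2.0, -1.0, -0.4, 0.3, 0.6, 1.5, 2.5]
labels = [0, 0, 1, 0, 1, 1, 1]
a, b = fit_platt(scores, labels)
print(f"P(y=1 | score=2.5) = {platt_probability(2.5, a, b):.2f}")
```

The fitted `a` comes out negative, so larger decision values map to higher probabilities of the positive class.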

Unsupervised Learning

Unsupervised learning explores patterns without labeled examples; probabilistic models like Gaussian Mixture Models reveal underlying structures.

Gaussian Mixture Models (GMMs)

Gaussian Mixture Models (GMMs) represent a powerful probabilistic approach to clustering and density estimation․ They assume data points are generated from a mixture of several Gaussian distributions, each with its own mean and covariance․ This allows for modeling complex, multi-modal data distributions that a single Gaussian cannot capture effectively․

The core idea is to assign each data point a probability of belonging to each Gaussian component․ Parameters – means, covariances, and mixing coefficients – are typically learned using the Expectation-Maximization (EM) algorithm․ GMMs are widely used in various applications, including image segmentation, speech recognition, and anomaly detection․ They provide a flexible and interpretable framework for uncovering hidden structure within data, offering a probabilistic assignment rather than hard clustering․
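
A compact sketch of EM for a one-dimensional mixture illustrates the two alternating steps: computing soft assignments (responsibilities), then re-estimating means, variances, and mixing coefficients from them. The data, the initial values, the variance floor, and the function names are assumptions for illustration.

```python
import math

def normal_pdf(x, mu, var):
    """Density of N(mu, var) at x."""
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def em_gmm_1d(data, mus, variances, weights, iterations=50):
    """Run EM for a 1-D Gaussian mixture; returns parameters and responsibilities."""
    mus, variances, weights = list(mus), list(variances), list(weights)
    k = len(mus)
    resp = []
    for _ in range(iterations):
        # E-step: responsibility of each component for each point.
        resp = []
        for x in data:
            joint = [weights[j] * normal_pdf(x, mus[j], variances[j]) for j in range(k)]
            total = sum(joint)
            resp.append([p / total for p in joint])
        # M-step: responsibility-weighted parameter updates.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / len(data)
            mus[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            var = sum(r[j] * (x - mus[j]) ** 2 for r, x in zip(resp, data)) / nj
            variances[j] = max(var, 1e-6)  # floor to avoid a collapsing component
    return mus, variances, weights, resp

data = [1.0, 1.2, 0.8, 1.1, 0.9, 5.0, 5.2, 4.8, 5.1, 4.9]  # toy data, assumed
mus, variances, weights, resp = em_gmm_1d(data, [0.0, 6.0], [1.0, 1.0], [0.5, 0.5])
print(mus, weights)
```

The returned responsibilities come from the final E-step and are the probabilistic assignments mentioned above: each row sums to one across components.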

Clustering with Probabilistic Models

Probabilistic models offer a nuanced approach to clustering, moving beyond hard assignments to provide probabilities of data points belonging to each cluster․ Unlike traditional methods like k-means, these models quantify uncertainty, reflecting the inherent ambiguity in cluster membership․ Gaussian Mixture Models (GMMs) are a prime example, representing clusters as Gaussian distributions and utilizing EM to estimate parameters․

Bayesian Gaussian Mixture Models (BGMMs) further enhance this by incorporating prior beliefs and providing posterior distributions over cluster assignments․ This allows for more robust and interpretable clustering results, particularly with limited data․ These models are valuable when understanding the confidence in cluster assignments is crucial, offering a richer understanding of data structure than deterministic approaches․

Model Selection and Evaluation

Evaluating models requires techniques like cross-validation and Bayesian comparison, alongside information criteria (AIC, BIC) to balance complexity and goodness-of-fit effectively․

Cross-Validation Techniques

Cross-validation is a crucial model evaluation method, systematically partitioning data into subsets for training and testing․ k-fold cross-validation divides the dataset into k equal parts; each fold serves as a test set once, while the remaining k-1 folds are used for training․ This process repeats k times, yielding k performance estimates․

Leave-one-out cross-validation (LOOCV) represents an extreme case where k equals the number of data points, providing a nearly unbiased estimate but being computationally expensive․ Stratified cross-validation maintains class proportions in each fold, vital for imbalanced datasets․ These techniques offer robust assessments of a model’s generalization ability, mitigating overfitting and providing reliable performance metrics for informed model selection․
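
The partitioning logic of k-fold cross-validation can be sketched with a small index generator. The function name is hypothetical, and the sketch does not shuffle or stratify, which real experiments usually need.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    indices = list(range(n_samples))
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size

folds = list(k_fold_indices(10, 3))
for train, test in folds:
    print("train:", train, "test:", test)
```

Setting `k` equal to `n_samples` gives leave-one-out cross-validation, with each test fold holding a single point.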

Bayesian Model Comparison

Bayesian model comparison offers a principled approach to selecting the best model from a set of candidates, utilizing Bayes’ theorem to calculate the posterior probability of each model given the observed data. This contrasts with frequentist methods relying on p-values. The posterior probability reflects the model’s plausibility, combining how well it explains the data (its marginal likelihood) with the prior probability assigned to the model.

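The Bayes factor, the ratio of the marginal likelihoods of two models, quantifies the evidence supporting one model over the other. A Bayes factor well above 1 indicates evidence for the model in the numerator, and a value well below 1 favors the other. Because the marginal likelihood averages the fit over the whole prior rather than using only the best-fitting parameters, overly flexible models are penalized automatically, which guards against overfitting. Bayesian comparison provides a comprehensive framework for model selection, incorporating prior knowledge and quantifying uncertainty.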

Information Criteria (AIC, BIC)

Information criteria, like Akaike Information Criterion (AIC) and Bayesian Information Criterion (BIC), provide methods for model selection balancing goodness-of-fit with model complexity․ AIC estimates the relative information lost when a given model is used to represent the process that generated the data, favoring models that minimize this loss․

BIC, derived from a large-sample approximation to the log marginal likelihood, penalizes each parameter by the logarithm of the sample size, which is heavier than AIC’s penalty for all but very small datasets. This makes BIC more conservative, often selecting simpler models. Differences in BIC can therefore be read as rough approximations to log Bayes factors, whereas AIC targets predictive accuracy rather than model probability. Lower AIC or BIC values indicate a better model. Both are widely used for comparing non-nested models and offer a practical alternative to full Bayesian model comparison.
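
Both criteria are simple functions of the maximized log-likelihood, using the standard definitions AIC = 2k - 2 ln L and BIC = k ln n - 2 ln L, where k is the number of parameters and n the number of observations. The log-likelihood values in the sketch below are invented for illustration.

```python
import math

def aic(log_likelihood, n_params):
    """Akaike Information Criterion: 2k - 2 ln L."""
    return 2 * n_params - 2 * log_likelihood

def bic(log_likelihood, n_params, n_samples):
    """Bayesian Information Criterion: k ln n - 2 ln L."""
    return n_params * math.log(n_samples) - 2 * log_likelihood

n = 100  # assumed number of observations
# Hypothetical fits: the complex model fits slightly better but uses more parameters.
simple = {"log_likelihood": -120.0, "n_params": 2}
complex_ = {"log_likelihood": -118.5, "n_params": 5}

for name, m in [("simple", simple), ("complex", complex_)]:
    print(name,
          "AIC:", aic(m["log_likelihood"], m["n_params"]),
          "BIC:", round(bic(m["log_likelihood"], m["n_params"], n), 2))
```

With these made-up numbers both criteria prefer the simpler model, and the gap is larger under BIC because its per-parameter penalty, ln(100), exceeds AIC's penalty of 2.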

Advanced Topics

Advanced topics delve into graphical models, Bayesian networks, and Hidden Markov Models, employing approximate inference techniques like Variational Inference and MCMC․

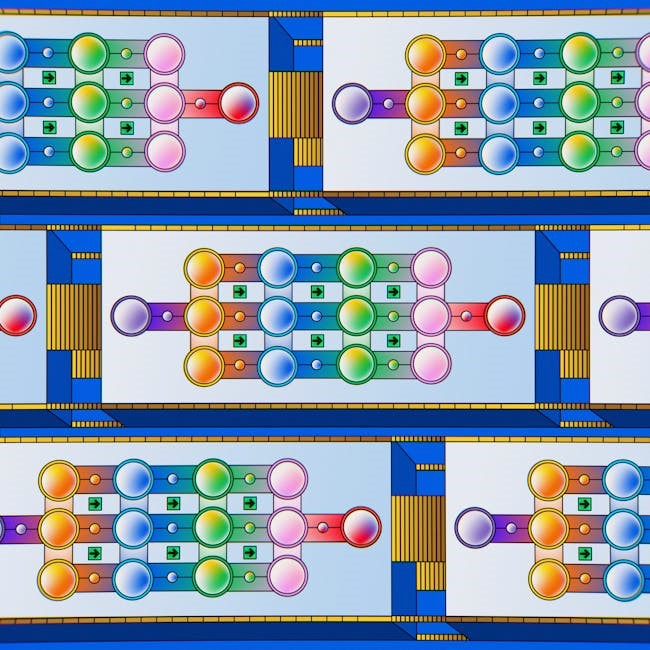

Graphical Models and Bayesian Networks

Graphical models provide a powerful visual and mathematical framework for representing complex probabilistic relationships among variables. These models use graphs in which nodes represent variables and edges depict direct dependencies. Bayesian networks, a specific type of graphical model, use directed acyclic graphs to encode conditional independence assumptions. The edges are often read causally, but a causal interpretation requires additional assumptions beyond the graph itself.

They are instrumental in reasoning under uncertainty, allowing us to update beliefs as new evidence becomes available. By exploiting the conditional independencies encoded in the graph, these networks compute posterior probabilities with far less work than enumerating the full joint distribution, although exact inference can still be intractable for densely connected graphs. The structure of the network encodes expert knowledge or is learned from data, enabling effective modeling of intricate systems. They are widely applied in areas like medical diagnosis, spam filtering, and risk assessment, offering a clear and interpretable approach to probabilistic modeling.

Hidden Markov Models (HMMs)

Hidden Markov Models (HMMs) are statistical models used to represent systems assumed to be Markovian – meaning the future state depends only on the present state․ However, the system’s state is ‘hidden’, observed through a series of emissions․ These emissions are probabilistically linked to the hidden states․

HMMs are particularly effective in modeling sequential data where the underlying process isn’t directly visible․ Key applications include speech recognition, where hidden states represent phonemes and emissions are acoustic signals, and bioinformatics, analyzing DNA sequences․ Algorithms like the Viterbi algorithm efficiently determine the most likely sequence of hidden states given observed emissions․ Parameter estimation, using techniques like the Baum-Welch algorithm, learns the model from training data, making HMMs a versatile tool for sequential data analysis․
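
The Viterbi algorithm mentioned above can be sketched with plain dictionaries. The two-state model and its probabilities are a toy example chosen for illustration, not values from the book, and the function signature is an assumption.

```python
def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return the most likely hidden-state path and its joint probability."""
    # best[t][s]: probability of the best path ending in state s at time t.
    best = [{s: start_p[s] * emit_p[s][observations[0]] for s in states}]
    back = [{}]
    for obs in observations[1:]:
        scores, pointers = {}, {}
        for s in states:
            # Best predecessor for state s at this time step.
            prev = max(states, key=lambda r: best[-1][r] * trans_p[r][s])
            scores[s] = best[-1][prev] * trans_p[prev][s] * emit_p[s][obs]
            pointers[s] = prev
        best.append(scores)
        back.append(pointers)
    # Trace back from the best final state.
    last = max(states, key=lambda s: best[-1][s])
    path = [last]
    for pointers in reversed(back[1:]):
        path.append(pointers[path[-1]])
    path.reverse()
    return path, best[-1][last]

# Toy model (assumed numbers): hidden health state, observed symptoms.
states = ["Healthy", "Fever"]
start_p = {"Healthy": 0.6, "Fever": 0.4}
trans_p = {"Healthy": {"Healthy": 0.7, "Fever": 0.3},
           "Fever": {"Healthy": 0.4, "Fever": 0.6}}
emit_p = {"Healthy": {"normal": 0.5, "cold": 0.4, "dizzy": 0.1},
          "Fever": {"normal": 0.1, "cold": 0.3, "dizzy": 0.6}}
path, prob = viterbi(["normal", "cold", "dizzy"], states, start_p, trans_p, emit_p)
print(path, prob)
```

For long sequences the products underflow, so practical implementations usually work with sums of log-probabilities instead.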

Approximate Inference Techniques (Variational Inference, MCMC)

Many probabilistic models encountered in machine learning lack closed-form solutions for inference – calculating posterior distributions․ Approximate inference techniques provide methods to estimate these distributions․ Variational Inference (VI) transforms the inference problem into an optimization one, finding a simpler distribution that closely approximates the true posterior․

By contrast, Markov Chain Monte Carlo (MCMC) methods construct a Markov chain whose stationary distribution is the posterior and use samples from that chain to approximate it. Algorithms like Metropolis-Hastings and Gibbs sampling fall under MCMC. MCMC is asymptotically exact, converging to the true posterior as the chain runs longer, but it is often computationally expensive. VI is usually faster, but its accuracy is limited by the family of approximating distributions. Choosing between them depends on the model’s complexity and the desired trade-off between precision and computational cost.
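
A minimal random-walk Metropolis-Hastings sampler shows how MCMC needs only an unnormalized log-density. Here the target is a standard normal standing in for a posterior; the step size, seed, burn-in length, and function names are assumptions for illustration.

```python
import math
import random

def metropolis_hastings(log_target, n_samples, step=1.0, start=0.0, seed=0):
    """Random-walk Metropolis-Hastings with a symmetric Gaussian proposal."""
    rng = random.Random(seed)
    x = start
    samples = []
    for _ in range(n_samples):
        proposal = x + rng.gauss(0.0, step)
        # Symmetric proposal, so the acceptance ratio is just the target ratio.
        log_accept = log_target(proposal) - log_target(x)
        if log_accept >= 0 or rng.random() < math.exp(log_accept):
            x = proposal
        samples.append(x)  # a rejected proposal repeats the current state
    return samples

def log_standard_normal(x):
    """Unnormalized log-density of N(0, 1)."""
    return -0.5 * x * x

samples = metropolis_hastings(log_standard_normal, n_samples=20000)
kept = samples[2000:]  # discard an assumed burn-in period
mean = sum(kept) / len(kept)
variance = sum((s - mean) ** 2 for s in kept) / len(kept)
print(f"mean ~ {mean:.2f}, variance ~ {variance:.2f}")
```

The sample mean and variance should land near 0 and 1; how near depends on the chain length and step size, which is the computational cost the text refers to.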

Practical Considerations

Real-world machine learning often involves missing data and requires careful feature engineering and selection to build robust, accurate, and reliable predictive models․

Dealing with Missing Data

Missing data presents a significant challenge in machine learning․ A probabilistic perspective allows for principled handling, moving beyond simple deletion or imputation․ Ignoring missingness can introduce bias, while naive imputation can distort distributions․ Techniques like multiple imputation, leveraging the probabilistic model, offer more robust solutions․

Considering the underlying mechanisms causing missingness – Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR) – is crucial․ Probabilistic models can explicitly incorporate these mechanisms․ Furthermore, utilizing techniques like data augmentation, informed by the probabilistic framework, can help mitigate the impact of incomplete datasets, leading to more reliable and generalizable machine learning models․

Feature Engineering and Selection

Feature engineering and selection are vital for building effective machine learning models․ A probabilistic perspective guides these processes by emphasizing informative features that improve model accuracy and interpretability․ Creating new features often involves transforming existing ones, guided by domain knowledge and probabilistic assumptions about the data․

Feature selection, conversely, aims to identify the most relevant subset of features․ Bayesian methods, utilizing prior distributions and likelihood functions, provide a natural framework for assessing feature importance․ Techniques like feature weighting and variable selection within probabilistic models help reduce dimensionality, prevent overfitting, and enhance model generalization․ Careful consideration of feature interactions, informed by probabilistic reasoning, further optimizes model performance․

Resources and Further Learning

To deepen your understanding of machine learning from a probabilistic perspective, several resources are invaluable․ Online courses from platforms like Coursera and edX offer comprehensive coverage of the subject matter, often referencing key texts․ The original book, “Machine Learning: A Probabilistic Perspective,” remains a cornerstone for advanced study, providing rigorous mathematical foundations․

Exploring related fields like Bayesian statistics and graphical models expands your toolkit․ Numerous research papers and open-source implementations are available online, fostering practical application․ Engaging with the machine learning community through forums and conferences provides opportunities for collaboration and knowledge sharing․ Remember that continuous learning is crucial in this rapidly evolving domain․